SAP S/4HANA

What is SAP S/4HANA?

SAP S/4HANA is an ERP business suite based on the SAP HANA in-memory database that allows companies to perform transactions and analyze business data in real time.

S/4HANA is the centerpiece -- or digital core -- of SAP's strategy for enabling customers to undergo digital transformation, a broadly defined process where they can modify existing business processes and models or create new ones. This allows the companies to be more flexible, responsive and resilient to changing business requirements, customer demands and environmental conditions. SAP refers to this S/4HANA-centered business environment as the intelligent enterprise.

S/4HANA features

S/4HANA was designed to make ERP more modern, faster and easier to use through a simplified data model, lean architecture and a new user experience built on the tile-based SAP Fiori UX. S/4HANA includes or is integrated with a number of advanced technologies, including AI, machine learning, IoT and advanced analytics. The SAP HANA in-memory database architecture and the integration of advanced technologies allow S/4HANA to help solve complex problems in real time and analyze more information faster than previous SAP ERP products.

The on-premises version of S/4HANA can also be deployed in public or private clouds or a hybrid. There is also a multi-tenant SaaS version, SAP S/4HANA Cloud, whose modules and features differ from those of the on-premises version.

History of S/4HANA

SAP released S/4HANA in February 2015 to much fanfare, with then-CEO Bill McDermott calling it the most important product in the company's history. S/4HANA stands for Suite for HANA, as the product was written to take advantage of HANA, which debuted in 2011. S/4HANA was completely rewritten for HANA, differentiating it from Business Suite on HANA, a version of S/4HANA's predecessor, SAP ERP Central Component (ECC), released in 2013 that ran on HANA.

SAP S/4HANA required rethinking the database concept and rewriting 400 million lines of code. According to SAP, the changes make the ERP system simpler to understand and use and more agile for developers. SAP sees S/4HANA as an opportunity for businesses to reinvent business models and generate new revenues by taking advantage of the internet of things and big data by connecting people, devices and business networks.

Also, because S/4HANA does not require batch processing, businesses can simplify their processes and execute them in real time. This means that business users can get insight on data from anywhere in real time for planning, execution, prediction and simulation, according to SAP.

Differences between S/4HANA and SAP ECC

S/4HANA shares many of the characteristics of previous SAP ERP products, up to and including ECC, but because S/4HANA was a redesign, it differs considerably from ECC in several areas. Fundamentally, S/4HANA is designed to take advantage of capabilities that are not available for ECC, such as advanced analytics and real-time processing.

Here are some of the main areas where S/4HANA differs from ECC:

- Database. S/4HANA only runs on HANA, whereas ECC can run on many databases, including DB2, Oracle, SQL Server and SAP MaxDB.

- Deployment options. S/4HANA has a wider array of deployment options, including on premises, public cloud, private cloud, hosted cloud and hybrid environments. ECC is primarily deployed on premises and can run in hosted public-cloud environments, but there is no specific public-cloud edition.

- User experience. S/4HANA uses the modern SAP Fiori UX, while ECC uses the older, standard SAP GUI, though it does have a limited number of Fiori apps. Fiori is a collection of commonly used S/4HANA functions that are displayed in a simple, consumer-ready tile design and can be accessed across various devices, including desktops, tablets and mobile devices.

- Advanced functions. S/4HANA is designed to take advantage of advanced technologies, including embedded analytics, robotic process automation, machine learning, AI and the SAP CoPilot digital assistant. These advanced capabilities are not available in ECC.

S/4HANA lines of business

In its earliest versions, S/4HANA was comprised of modules that each contained functionality for a distinct business process. The first module was Simple Finance, which streamlined financial processes and enabled real-time analysis of financial data. Later renamed SAP Finance, it helped companies align their financial and non-financial data into what SAP refers to as a single source of truth. Business Suite users often deployed SAP Finance as the first step on the road to S/4HANA.

SAP added modules and functionality in later releases, such as the following:

- S/4HANA 1511, released in November 2015, introduced a logistics module called Materials Management and Operations.

- S/4HANA 1610, released in October 2016, included modules for supply chain management, including Advanced Available-to-Promise; Inventory Management; Material Requirements Planning; Extended Warehouse Management; and Environment, Health and Safety (EHS).

S/4HANA subsequently reorganized the SAP ECC ERP modules into lines of business (LOBs) that are comprised of functions for specific business processes. The first LOB was SAP S/4HANA Finance, and other LOBs have been added with subsequent releases.

As of 2022, S/4HANA includes the following LOBs:

S/4HANA Finance focuses on all the financial aspects of a business, including financial accounting, controlling, treasury and risk management, financial planning, financial close and consolidation.

S/4HANA Logistics is a collection of LOB modules centered around processes for supplier relationship management and supply chain management, including the following:

- S/4HANA Sourcing and Placement, which is centered on capabilities needed to source and obtain raw materials for fulfilling production orders, including extended procurement, operational purchasing and supplier and contract management;

- S/4HANA Manufacturing, which focuses on the processes required to manufacture products, including responsive manufacturing, production operations, scheduling and delivery planning and quality management;

- S/4HANA Supply Chain, which focuses on end-to-end business planning and logistics processes, from pre-production to distribution to end purchasers, including production planning, batch traceability, warehousing, inventory and transportation management; and

- S/4HANA Asset Management, which focuses on maintenance processes for a company's fixed assets, from machine tools to plants, warehouses and other buildings, including plant maintenance and EHS monitoring.

S/4HANA Sales focuses on processes that are required to fulfill sales orders, including pricing, sales inquiries and quotes, promise checks, incompletion checks, repair orders, individual requirements, return authorizations, credit and debit memo requests, picking and packing, billing and revenue recognition.

S/4HANA R&D and Engineering focuses on the product lifecycle, including defining the product structure and bills of materials, product lifecycle costing, project and portfolio management, innovation management, management of chemicals or other sensitive materials used in development and health and safety regulatory compliance.

SAP later extended the digital core LOB capabilities to meet specific industry requirements. As of 2022, the industry segments were Consumer Industries; Discrete Industries; Energy and Natural Resources; Financial Services; Public Service; and Service Industries.

Each industry segment contains functionality for particular business requirements.

Consumer Industries

- Agribusiness

- Consumer Products

- Fashion

- Life Sciences

- Retail

- Wholesale Distribution

Discrete Industries

- Aerospace and Defense

- Automotive

- High Tech

- Industrial Manufacturing

Energy and Natural Resources

- Building Products

- Chemicals

- Mill Products

- Mining

- Oil, Gas and Energy

- Utilities

Financial Services

- Banking

- Insurance

Public Service

- Defense and Security

- Federal and National Government

- Future Cities

- Healthcare

- Higher Education and Research

- Regional, State and Local Government

Service Industries

- Cargo Transportation and Logistics

- Engineering, Construction and Operations

- Media

- Passenger Travel and Leisure

- Professional Services

- Sports and Entertainment

- Telecommunications

Advantages and drawbacks of S/4HANA

S/4HANA is a complex ERP system that is best suited for large, complex organizations, enabling them to standardize business processes across multiple geographic locations and corporate entities. It includes a broad package of capabilities that are focused on the complex business requirements of industries such as manufacturing, procurement, supply chain, distribution, retail and financial services.

SAP is the largest ERP vendor in global market share and revenue, so it invests heavily in research and development for S/4HANA. This means S/4HANA is at the leading edge of ERP functionality, integrating advanced technologies, such as AI, machine learning, industrial IoT, blockchain and advanced analytics.

Another advantage of S/4HANA is that it's built on the HANA in-memory database. This greatly improves processing speed and enables real-time analytics and transactions, which can be hugely important for organizations that require immediate financial reporting.

However, the complexity of S/4HANA can make it unsuitable for organizations that have relatively simple requirements. S/4HANA is expensive to implement and run, so it's best suited for organizations that have the resources to deploy it effectively.

Because S/4HANA has a significantly different architecture, data model and capabilities than previous SAP ERP systems like ECC, it can suffer from a lack of developers and administrators who have advanced S/4HANA skills and experience, although this was a bigger issue in the early years. Many S/4HANA customers must engage with third-party systems integrators to deploy and manage the environment, which can increase costs. The complexity of S/4HANA also increases the risk of failed implementations if the projects are not managed properly or requirements are not properly defined.

S/4HANA deployment

S/4HANA was developed as an on-premises software system but can also be deployed in a variety of cloud scenarios. Most customers who move to the cloud choose to deploy SAP S/4HANA Cloud, which is essentially a different product than standard S/4HANA. The initial versions of S/4HANA Cloud had significantly fewer capabilities than S/4HANA, but the feature sets have become more similar with subsequent releases.

The standard on-premises S/4HANA can be deployed in a private cloud environment hosted on the servers of a cloud service provider. This option is usually chosen by companies that want some of the advantages of cloud computing, such as no longer needing to manage infrastructure, without having to share the cloud environment with other instances of the ERP system.

S/4HANA can also be deployed in hybrid environments in which some instances run on hosted cloud infrastructure and others run on premises. This deployment option is usually chosen by companies that have security or data governance requirements.

How to implement S/4HANA

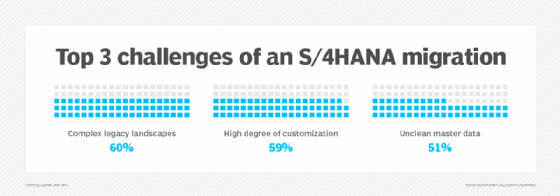

Whatever deployment method a company chooses, implementing S/4HANA is a complex, time-consuming and costly process. Most S/4HANA customers will replace existing SAP ECC systems, but a migration to S/4HANA is different from a standard version upgrade. Indeed, in many cases an S/4HANA migration is more like a new software implementation than an upgrade.

Because S/4HANA has a simplified data model and includes many different functions than SAP ECC, it requires a company to rethink and redesign its business processes to take advantage of S/4HANA's advanced capabilities.

Most SAP ECC systems have been heavily customized, with thousands of specialized functions developed to meet a company's internal requirements or those of its particular industry. Many of these custom functions are not needed because S/4HANA includes them as standard functionality. This means that before beginning an S/4HANA implementation, companies should thoroughly examine all their processes to understand how they can be best designed for S/4HANA and eliminate functions that may not be needed.

When embarking on an S/4HANA implementation, organizations can take either a brownfield or greenfield approach. In a brownfield implementation, a company takes its existing SAP landscape and transfers it largely wholesale to S/4HANA. This means that the company continues to use at least some legacy functions. A brownfield implementation is usually less disruptive and time-consuming than a greenfield approach, but the company may not get all of the transformational value of moving to S/4HANA.

A greenfield implementation involves installing and configuring S/4HANA in a completely new environment. Companies need to redesign entire processes for a greenfield approach, making it more disruptive, costly and time-consuming than a brownfield approach, but when completed it provides all of the advantages of S/4HANA's modern ERP capabilities.

Managing data is a major part of any S/4HANA implementation, regardless of the approach a company takes. Data that's being moved into the new system needs to be prepared for S/4HANA's simplified data model.

SAP S/4HANA Cloud

In March 2017, SAP released S/4HANA Cloud, a multi-tenant SaaS version of S/4HANA. S/4HANA Cloud is best suited for organizations of 1,500 employees or more that may want to run a two-tiered ERP system, in which a corporate entity runs a full business suite -- such as ECC or S/4HANA -- on premises and implements S/4HANA Cloud at the division or subsidiary level.

S/4HANA Cloud includes next-generation technology like machine learning through a tool called SAP Clea, and a conversational digital assistant bot called CoPilot.

Like most SaaS applications, S/4HANA Cloud has a new edition released every quarter. The naming conventions follow the on-premises model of year and month of release, so SAP S/4HANA Cloud 1709 was released in September 2017.

SAP S/4HANA embedded analytics

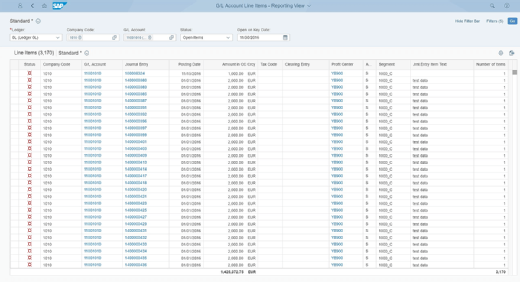

S/4HANA includes embedded analytics that allow users to perform real-time analytics on live transactional data.

This is done through Virtual Data Models, prebuilt models and reports based on SAP HANA Core Data Services that analyze HANA operational data without requiring a data warehouse. The analytics functions come with S/4HANA software and do not require separate installation or licenses.

SAP S/4HANA roadmap

A major release of the on-premises S/4HANA and S/4HANA Cloud comes out once a year. The product-naming convention combined the year and month of release until the 2020 release, when it was changed to only the year.

As of 2022, the S/4HANA releases were the following:

- 1511 -- released November 2015

- 1610 -- released October 2016

- 1709 -- released September 2017

- 1809 -- released September 2018

- 1909 -- released September 2019

- 2020 -- released 2020

- 2021 -- released 2021

- 2022 -- released 2022

Each S/4HANA release includes new capabilities and extensions to the software's functionality. In addition to the new functional capabilities, recent S/4HANA releases have added cloud integration with SAP cloud products (SAP Ariba, SuccessFactors, Fieldglass and Concur) and other enterprise systems; and embedded emerging technologies in more functional modules, including AI, machine learning, IoT, advanced analytics and blockchain in more LOB modules.